Imagine waking up to your morning routine orchestrated perfectly—lights gradually brighten, the coffee maker starts brewing, and your assistant greets you with today’s schedule. But here’s what makes this scenario truly revolutionary: none of your personal data left your home, and every command was processed without a single byte touching the cloud. Welcome to the era of voice assistants with local AI that learn your routines offline, where privacy and personalization finally coexist.

For years, we’ve traded convenience for confidentiality, allowing our daily patterns, voices, and even accidental conversations to be stored on distant servers. The promise of truly intelligent homes has been shackled by internet connectivity requirements and legitimate privacy concerns. But a fundamental shift is underway. New generations of voice hubs are embedding sophisticated neural networks directly into your home hardware, enabling them to understand, learn, and anticipate your needs while keeping your most intimate data exactly where it belongs—under your roof. This isn’t just about avoiding internet outages; it’s about reclaiming ownership of your digital footprint while building a smarter, more responsive living environment.

Top 10 Voice Assistants with Local AI

Detailed Product Reviews

1. AI Voice Recorder with App Control, Advanced AI Technology for Transcription & Summarization, 64GB Memory, Magnetic Case, Supports 50 Languages – Audio Recorder for Lectures, Meetings, Interviews

Overview:

The AI Voice Recorder with App Control positions itself as an affordable yet powerful documentation tool for students, journalists, and professionals. At $49.99, it delivers GPT-4o powered transcription and summarization in a sleek aluminum alloy body with a magnetic case, making it highly versatile for lectures, meetings, and interviews.

What Makes It Stand Out:

The 1-year premium membership with unlimited transcription time is a standout value proposition that competitors rarely match. Combined with 64GB internal storage and 35-hour battery life, it eliminates common pain points of capacity anxiety and frequent charging. The magnetic case enables creative hands-free placement, while the dual-microphone system (MEMS silicon and bone conduction simulation) promises superior voice clarity.

Value for Money:

This device punches well above its weight class. Similar AI-powered recorders typically start at $80-100 without including unlimited transcription. The inclusion of GPT-4o technology at this price point makes it one of the best entry points into AI-assisted documentation.

Strengths and Weaknesses:

Strengths include exceptional battery life, generous storage, robust AI capabilities, and premium build quality. The magnetic design adds practical flexibility. Weaknesses are the limited 50-language support (far fewer than competitors), potential dependency on the DOWAY app’s longevity, and the brand’s unproven track record for long-term software updates.

Bottom Line:

For budget-conscious buyers seeking cutting-edge AI transcription without first-year subscription fees, this recorder is a compelling choice that delivers professional-grade features at an entry-level price.

2. Yahboom AI Voice Recognition Module Voice Broadcast Integrated Custom Wake-up Word Programmable Sound Sensor Support Jetson/Raspberry Pi/ESP32/STM32

Overview:

The Yahboom AI Voice Recognition Module is a specialized development board designed for engineers and hobbyists building voice-controlled hardware projects. Unlike consumer voice recorders, this $18.99 module integrates directly with microcontrollers like ESP32, Raspberry Pi, and STM32, offering professional-grade voice processing for custom applications and IoT devices.

What Makes It Stand Out:

The CI1302 chip’s 99% recognition accuracy with built-in echo cancellation and noise reduction is impressive for a budget module. Its web-based command editing system supports 110+ preset commands with multi-language options, while the open-source approach and ROS1/ROS2 SDK support make it exceptionally versatile for robotics and smart home projects requiring custom wake words.

Value for Money:

At under $20, this module is remarkably affordable for developers. Comparable voice recognition modules typically cost $30-50, making Yahboom’s offering a cost-effective solution for prototyping and small-scale production environments.

Strengths and Weaknesses:

Strengths include high customization, broad development board compatibility, plug-and-play interface design, and excellent technical support resources. The 99% accuracy claim is backed by professional-grade noise suppression. Weaknesses include Windows-only firmware burning software, steep learning curve for non-technical users, lack of built-in storage or battery (requires external hardware), and no consumer-ready packaging.

Bottom Line:

This is an outstanding tool for developers and makers but unsuitable for casual users seeking an out-of-the-box recording solution. It excels in custom hardware integration scenarios.

3. AI Note Voice Recorder: Transcribe, Summarize & Text Translation, 152 Languages, AI Noise Cancellation, 64GB, APP Control, One-Step Magnetic Attachment, Long-Lasting Battery – Meeting, Call, Lecture

Overview:

The AI Note Voice Recorder is a premium device that leverages multiple cutting-edge AI models to transform audio into actionable insights. At $99.99, it targets professionals and students who need more than basic transcription, offering features like mind mapping, speaker distinction, and interactive Q&A with recordings through the companion app.

What Makes It Stand Out:

This recorder uniquely combines GPT-5.0, Gemini 2.5 Pro, and o3-mini models, providing unparalleled AI versatility. The 152-language support is class-leading, while the innovative call recording mode (attaching to your phone) and mind map generation set it apart from conventional recorders. The hybrid Pro Mic System with AI noise cancellation blocking 90% of background noise ensures exceptional clarity in any environment.

Value for Money:

While nearly double the price of budget alternatives, the multi-model AI approach and advanced features justify the cost for power users. The 1-year unlimited transcription and processing add significant value, though some competitors offer similar subscriptions at lower hardware prices.

Strengths and Weaknesses:

Strengths include cutting-edge AI integration, superior language support, versatile recording modes, excellent noise cancellation, and 64GB storage. The ultra-slim, card-sized design enhances portability. Weaknesses are the premium price point, potential feature overload for simple use cases, and dependence on multiple AI services that may have varying performance.

Bottom Line:

Ideal for tech-savvy professionals and students who need maximum AI capability and language support. The advanced features merit the investment for those who’ll utilize them fully.

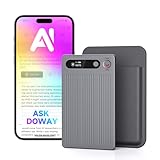

4. RECOLX AI Voice Recorder & Transcriber with GPT-5.2 Analysis – 30-Hour Recording, 112-Language Speech-to-Text & Auto Summary for Meetings, Lectures & Interviews,Grey

Overview:

The RECOLX AI Voice Recorder & Transcriber strikes a balance between advanced AI capabilities and practical usability. Priced at $75.99, it offers GPT-5.2 powered transcription across 112 languages with a streamlined workflow for meetings, lectures, and interviews. The grey aluminum finish gives it a professional aesthetic suitable for corporate environments.

What Makes It Stand Out:

The device leverages multiple AI models including GPT-4o/5/5.2 and Gemini-3-Pro, providing robust transcription and summarization. Its 30-hour battery life ensures all-day reliability, while the slim, pocket-friendly design emphasizes portability. The USB-C connectivity enables quick file transfers to laptops and cloud services, streamlining post-meeting workflows.

Value for Money:

Positioned in the mid-range segment, this recorder offers excellent value by combining latest-generation AI with practical hardware. It’s more affordable than premium models while delivering superior AI capabilities compared to budget options, making it accessible to serious students and professionals.

Strengths and Weaknesses:

Strengths include multi-model AI support, impressive battery life, strong language coverage, intelligent noise reduction, and portable design. The 112-language support covers most use cases effectively. Weaknesses include unspecified internal storage capacity, lack of unique features like mind mapping or call recording, and a generic design that doesn’t stand out. The brand recognition is also lower than established competitors.

Bottom Line:

A solid, no-nonsense choice for professionals seeking reliable AI transcription without breaking the bank. It delivers core functionality exceptionally well.

5. Archer AI Voice Recorder Earpiece, Wireless Single Ear Bluetooth Headset, Meeting Assistant with Transcription, AI Noise Canceling 50dB, AI Transcribe & Summarize with App for Office/Meeting/Driving

Overview:

The Archer AI Voice Recorder Earpiece revolutionizes the category by combining a Bluetooth headset with AI transcription in a wearable 13g package. At $149, it’s the most expensive option but offers unmatched convenience for mobile professionals who need hands-free recording and noise cancellation during calls, meetings, or while driving.

What Makes It Stand Out:

The industry-leading Oleap AI VoiceOn technology suppresses 50dB of background noise—far exceeding typical 20-30dB ratings—ensuring crystal-clear recordings even in chaotic environments. The 4-in-1 design eliminates the need for separate earbuds, recorders, and notebooks. Four recording modes (Calls, Media Voice, Personal Memos, Ambient Sound) provide versatility, while the ergonomic ear hook enables all-day wear without pressure.

Value for Money:

The premium price is justified by its unique form factor and exceptional noise cancellation. For executives, salespeople, and field workers, the convenience outweighs the cost. However, those needing extended local storage may find better value elsewhere, as this prioritizes portability over capacity.

Strengths and Weaknesses:

Strengths include revolutionary wearable design, best-in-class noise cancellation, ultra-lightweight comfort, encrypted local storage, and hands-free operation. The Bluetooth connectivity adds modern convenience. Weaknesses are the limited 133-minute local storage capacity, the high price point, a single-ear design that may not suit all preferences, and potential battery drain when Bluetooth and recording run simultaneously.

Bottom Line:

Perfect for on-the-go professionals prioritizing convenience and noise cancellation over storage capacity. It’s a specialized tool that excels in mobile scenarios where traditional recorders are impractical.

6. Comulytic Note Pro AI Voice Recorder, Unlimited Transcribe & Summarize, One Tap Recording Device with AI Note Taking, Support 113 Languages, 64GB, Audio Recorder for Calls, Meetings, Lectures, Black

Overview: The Comulytic Note Pro positions itself as a premium AI-powered voice recorder designed for professionals who demand comprehensive documentation capabilities. This sleek device combines hardware excellence with cutting-edge AI models to capture, transcribe, and summarize conversations across 113 languages. With 64GB local storage and unlimited cloud backup, it promises to eliminate manual note-taking forever.

What Makes It Stand Out: Unlimited free transcription and summarization powered by ChatGPT-5.1 and Gemini 3.0 Pro sets this device apart from subscription-heavy competitors. The “360° Client Decoding” feature offers automated meeting summaries, to-do lists, and a vertical knowledge base tailored for specific professions like legal, real estate, and consulting. Its ultra-slim 3mm aluminum body with Gorilla Glass display achieves premium portability without sacrificing durability.

Value for Money: At $138.99, the upfront cost is significant, but the unlimited free AI services deliver exceptional long-term value compared to rivals charging $10-15 monthly. The 45-hour continuous recording and 107-day standby time ensure reliability during extended travel or back-to-back meetings. For sales professionals, consultants, and executives who transcribe hours weekly, this investment pays for itself within months.

Strengths and Weaknesses: Pros: Unlimited AI transcription with premium models; 113-language support; dual Wi-Fi/BLE connectivity for real-time sync; triple-mic array with AI noise reduction; 98% accuracy with professional terminology recognition; exceptional battery life.

Cons: High initial price point; may be overkill for casual users; reliance on specific AI models that could become outdated; premium features come with a learning curve.

Bottom Line: The Comulytic Note Pro is a powerhouse for serious professionals who prioritize accuracy and unlimited usage. If your workflow demands constant transcription of specialized content, this device justifies its premium price through eliminated subscription fees and superior AI integration.

7. AI Voice Recorder, Note Voice Recorder with Transcribe&Summarize, AI Noise Cancellation Technology, Two-Way Translation with APP, 80+ Languages, 64GB Memory, Recording Device for Interview, Meetings

Overview: This competitively-priced AI voice recorder targets productivity-focused users seeking smart transcription without breaking the bank. Leveraging GPT-5 AI, it transforms speech into structured text, summaries, and mind maps while offering two-way translation across 80+ languages. The 30g, 3mm-thin design makes it an unobtrusive daily companion for interviews, meetings, and lectures.

What Makes It Stand Out: The 120+ professional templates tailored to specific scenarios (meetings, calls, speeches) demonstrate thoughtful user experience design. Its bone conduction microphone, combined with two precision mics, captures crystal-clear audio, especially for phone calls. The privacy-first approach with on-device encryption and access-controlled cloud storage addresses growing data security concerns.

Value for Money: At $99.99, this recorder hits a sweet spot between basic digital recorders and premium AI devices. The generous 300 monthly free minutes accommodate moderate users, while the $9.99/month Pro plan remains affordable for heavy users. With 30-hour battery life and 64GB storage (1000 hours), the hardware specs match pricier alternatives, making it a budget-conscious choice.

Strengths and Weaknesses: Pros: Excellent price-to-performance ratio; AI noise cancellation technology; compact and lightweight; secure private storage; versatile app with professional templates; bone conduction mic for call clarity.

Cons: Limited to 80+ languages versus competitors’ 113+; 300 free minutes may be insufficient for power users; subscription required for unlimited use; relies on a single AI model (GPT-5) where some competitors combine several.

Bottom Line: Ideal for students, journalists, and mid-level professionals, this recorder balances capability and affordability. Choose it if you need reliable AI transcription with moderate monthly usage and prioritize data privacy over cutting-edge AI models.

8. IUNSONE Ai Voice Recorder,Voice Recorder with App Control,Powered Transcription&Summarization,Bidirectional Translation,64GB Memory for Lectures,Meetings,and Calls,Ideal for Students, Professionals

Overview: The IUNSONE AI Voice Recorder emphasizes global communication and security, featuring ChatGPT-4.1 integration and bidirectional translation capabilities. Weighing just 1.09 ounces with a magnetic attachment system, this device targets mobile professionals and students navigating multilingual environments. The 64GB storage holds 540 hours of audio, backed by ultra-fast 0.5-second cloud backups.

What Makes It Stand Out: The Vibration Conduction Sensor (VCS) technology captures both sides of conversations with exceptional clarity, automatically separating audio tracks. Real-time bidirectional translation and AI voice synthesis facilitate immediate cross-language communication. Security features like automatic saving during power loss and encrypted cloud storage appeal to privacy-conscious users handling sensitive information.

Value for Money: Pricing is not disclosed for the hardware, which is concerning. The software model offers 400 free monthly minutes, with Premium ($69.99/year) and Unlimited ($149.99/year) tiers. While the free allowance exceeds competitors, the unlimited plan is expensive. Value assessment hinges entirely on the missing device price, making it difficult to recommend without complete cost transparency.

Strengths and Weaknesses: Pros: Advanced VCS technology for call recording; bidirectional translation; lightweight magnetic design; robust security and backup; 90% global language coverage; automatic audio track separation.

Cons: Hardware price not listed; uses older ChatGPT-4.1 versus competitors’ newer models; unlimited subscription is costly; language count vague (“90% of global languages”).

Bottom Line: Wait for pricing details before considering this device. Its translation features and security make it compelling for international business travelers, but the opaque pricing and older AI engine suggest better value may exist elsewhere. Request full cost disclosure before purchasing.

9. Generative AI on Raspberry Pi 5: Build Private LLMs, Voice Assistants, and Autonomous Agents Locally

Overview: This technical guidebook empowers developers and AI enthusiasts to build private, offline AI systems using the affordable Raspberry Pi 5 platform. It provides step-by-step instructions for creating local LLMs, voice assistants, and autonomous agents without relying on cloud services or subscription fees. The book targets hobbyists, educators, and privacy-focused developers seeking hands-on AI implementation experience.

What Makes It Stand Out: Unlike consumer devices, this resource teaches true self-reliance in AI development. Building locally eliminates ongoing costs and data privacy concerns while demonstrating how modest hardware can run sophisticated models. The educational value extends beyond simple usage, offering deep understanding of AI architecture, model optimization for edge devices, and autonomous agent design principles.

Value for Money: At $39.99, this book delivers exceptional value for technically inclined readers. The knowledge enables creating multiple AI projects for the one-time hardware cost of a Raspberry Pi 5 (under $100), versus recurring subscription fees of $120+ annually for commercial AI services. For STEM educators and students, it provides a curriculum-worthy foundation in practical AI deployment.

Strengths and Weaknesses: Pros: One-time cost with no subscriptions; complete data privacy; educational depth; hardware affordability; customizable solutions; no vendor lock-in; ideal for learning AI fundamentals.

Cons: Requires technical proficiency and time investment; Raspberry Pi 5 not included; troubleshooting may challenge beginners; lacks immediate out-of-box convenience; performance limitations versus cloud AI.

Bottom Line: Perfect for developers, tech-savvy professionals, and educators who value privacy and learning. If you’re comfortable with command-line interfaces and Python, this guide offers unmatched long-term value. Not recommended for non-technical users seeking plug-and-play solutions.

10. CurioCub AI Voice Assistant Alarm Clock for Kids for Ages 3-18 | Builds Daily Routines, Fights Procrastination with Visual Pomodoro Timer, Habit Coach & Sleep Aid Night Light

Overview: CurioCub reimagines the children’s alarm clock as an AI-powered developmental tool that grows with kids from toddler to teen. This 2.8-inch smart device combines time management, habit formation, and educational assistance through voice interaction. It transforms routine battles into engaging games while providing practical focus tools like a visual Pomodoro timer and soothing sleep aids.

What Makes It Stand Out: The age-spanning versatility (3-18 years) is remarkable, adapting from simple routine prompts for preschoolers to productivity coaching for high schoolers. The visual Pomodoro timer gamifies focus sessions, while AI-driven habit coaching turns chores into achievements. Integrated white noise, nature sounds, and bedtime stories create a comprehensive sleep environment, potentially reducing parental bedtime workload.

Value for Money: Priced at $35.99, CurioCub competes with basic smart speakers while offering specialized child-development features. It consolidates multiple devices (alarm clock, night light, white noise machine, habit tracker) into one unit. For parents seeking routine support, the time saved and habits built justify the cost, though durability across a 15-year age range remains untested.

Strengths and Weaknesses: Pros: Wide age range adaptability; routine gamification; visual focus timer; comprehensive sleep aid library; hands-free operation that eases the load on parents; educational AI interactions.

Cons: Screen time concerns for young children; privacy implications of kids’ data; reset procedure suggests software instability; effectiveness varies by child; limited AI capability versus full smart speakers.

Bottom Line: An innovative solution for parents struggling with morning and bedtime routines. Best for children aged 5-14 who respond to gamification. Carefully review privacy settings before use, and consider it a supplement rather than replacement for parental guidance. A thoughtful gift that delivers practical daily value.

What Makes Local AI Voice Assistants Different?

The distinction between cloud-dependent and local AI systems runs far deeper than where data gets processed. It’s a complete architectural overhaul that reimagines how artificial intelligence serves your home. Traditional voice assistants function like remote-controlled drones, constantly tethered to massive data centers that handle the heavy lifting of speech recognition and intent processing. Every “turn on the lights” request becomes a round-trip journey across the internet, introducing latency, privacy risks, and dependency on external infrastructure.

Local AI flips this model entirely. These devices house their own “brain”—dedicated processing units designed specifically for machine learning tasks. When you speak, your voice commands are converted to action within milliseconds, processed by neural networks that live on the device itself. This fundamental difference means your assistant continues working during internet outages, responds faster to commands, and never shares your voice patterns or routine data with third parties. The intelligence is embedded in your home, not rented from a distant corporation.

The Privacy-First Architecture

Privacy-first design isn’t a feature; it’s the foundation of local AI voice assistants. These systems employ edge computing principles that keep sensitive data processing confined to your local network. When your assistant learns that you typically ask for weather updates at 7:15 AM on weekdays, that pattern recognition happens in dedicated memory on the device, not in a server farm that could be accessed by employees, hackers, or government subpoenas.

This architecture typically includes hardware-level encryption for stored data, meaning even if someone physically stole your device, extracting your learned routines would require breaking enterprise-grade security. Many systems also implement “privacy zones”—areas of memory that are permanently isolated and cannot be accessed even for diagnostic purposes. The microphone arrays themselves often include physical kill switches that disconnect power at the circuit level, providing true assurance that no listening occurs when you want silence.

Understanding On-Device Processing

On-device processing represents a technical marvel of miniaturization and efficiency. Modern neural processing units (NPUs) can perform trillions of operations per second while consuming less power than a traditional light bulb. These chips are specifically designed for the parallel computations that make machine learning possible, allowing them to run complex models like transformer networks that were previously only feasible in massive data centers.

The real magic lies in model compression and optimization. Developers use techniques like quantization (reducing numerical precision) and pruning (removing unnecessary neural connections) to shrink sophisticated AI models by 90% or more without significant accuracy loss. Your offline assistant might run a 500MB compressed model that would have been 5GB in its original form, all while fitting into a device no larger than a traditional smart speaker. This compression enables real-time processing of natural language, speaker identification, and contextual understanding without cloud support.
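To make the compression idea concrete, here is a minimal Python sketch of the two techniques named above. It is an illustration under stated assumptions, not any vendor's actual pipeline: the function names and the toy weight list are hypothetical, and real toolchains work on full tensors rather than short lists.

```python
def prune_by_magnitude(weights, keep_fraction):
    """Zero out the smallest-magnitude weights, keeping about keep_fraction of them."""
    keep = max(1, int(len(weights) * keep_fraction))
    # The threshold is the magnitude of the keep-th largest weight.
    threshold = sorted((abs(w) for w in weights), reverse=True)[keep - 1]
    return [w if abs(w) >= threshold else 0.0 for w in weights]


def quantize_int8(weights):
    """Map floats to integers in [-127, 127] with one shared scale (symmetric quantization)."""
    max_abs = max(abs(w) for w in weights) or 1.0
    scale = max_abs / 127.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Toy weights (hypothetical values chosen for illustration).
weights = [0.82, -0.03, 0.41, 0.002, -0.67, 0.05, -0.29, 0.01]
pruned = prune_by_magnitude(weights, keep_fraction=0.5)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)

# 32-bit floats take 4 bytes each; int8 values take 1 byte each.
float_bytes = 4 * len(weights)
int8_bytes = 1 * len(quantized)
```

Going from 32-bit floats to 8-bit integers alone gives a 4x reduction. Reaching the 10x implied by the 5GB-to-500MB example would additionally depend on storing the pruned zeros compactly or using lower bit widths, which this sketch does not attempt.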

How Offline Learning Actually Works

The learning process in local AI systems mirrors human habit formation—consistent repetition reinforces neural pathways until actions become automatic. When you first set up your offline voice assistant, it starts with a pre-trained base model that understands general speech patterns and common smart home commands. From there, it begins building a personalized overlay model based entirely on your interactions.

Every time you issue a command, the device logs not just the words, but the context: time of day, day of week, which room you’re in, what devices you typically control together, and even subtle voice characteristics. Over days and weeks, machine learning algorithms identify correlations. Perhaps you always turn on kitchen lights and start a playlist when you arrive home around 6 PM on weekdays. The assistant begins weighting these patterns, eventually suggesting or automatically executing the routine when it detects the trigger conditions.
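The weighting process described above can be sketched as a simple frequency counter. This is a deliberately simplified stand-in for whatever model a real device uses: the `RoutineLearner` class, its thresholds, and the action labels are all hypothetical names chosen for illustration.

```python
from collections import Counter, defaultdict
from datetime import datetime


class RoutineLearner:
    """Counts which actions follow which contexts, entirely in local memory."""

    def __init__(self, min_observations=5, min_confidence=0.8):
        self.min_observations = min_observations
        self.min_confidence = min_confidence
        self.action_counts = defaultdict(Counter)

    @staticmethod
    def context_key(when, room):
        # Context here is day type, hour, and room; real systems would log more.
        day_type = "weekday" if when.weekday() < 5 else "weekend"
        return (day_type, when.hour, room)

    def observe(self, when, room, action):
        self.action_counts[self.context_key(when, room)][action] += 1

    def suggest(self, when, room):
        """Return the dominant action for this context, or None if evidence is weak."""
        counts = self.action_counts.get(self.context_key(when, room))
        if not counts:
            return None
        action, hits = counts.most_common(1)[0]
        total = sum(counts.values())
        if total >= self.min_observations and hits / total >= self.min_confidence:
            return action
        return None


learner = RoutineLearner()
for day in range(2, 7):  # Monday 2 June through Friday 6 June 2025
    learner.observe(datetime(2025, 6, day, 18, 5), "kitchen", "lights_on+playlist")

# The following Monday at 6:20 PM falls in the same (weekday, 18, kitchen) context.
suggestion = learner.suggest(datetime(2025, 6, 9, 18, 20), "kitchen")
weekend_suggestion = learner.suggest(datetime(2025, 6, 7, 18, 5), "kitchen")
```

After five consistent weekday observations the learner suggests the routine, while the Saturday lookup returns nothing because that context has never been seen.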

Pattern Recognition Without the Cloud

Pattern recognition without cloud connectivity relies on sophisticated unsupervised learning algorithms running entirely on local hardware. These systems use clustering techniques to group similar commands and identify temporal patterns. Your assistant might discover that you have three distinct “morning routine” patterns—weekday early, weekday late, and weekend—all without being explicitly programmed to recognize these categories.

The device maintains a rolling memory buffer of recent interactions, typically covering 30-90 days, which lets it track gradual drift in your behavior. It might notice your “movie night” routine creeping earlier as winter approaches and daylight fades sooner; recognizing a full seasonal cycle requires retaining summarized patterns for longer than the raw buffer. This pattern detection uses algorithms like Locality-Sensitive Hashing (LSH) that can find similarities in high-dimensional data efficiently on limited hardware. The result is an assistant that feels intuitive because it’s learned from your actual behavior, not from aggregated data of millions of users.
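For readers curious what Locality-Sensitive Hashing looks like in practice, here is a minimal sketch of one common variant, random-hyperplane hashing, which approximates cosine similarity. The feature layout and function names are assumptions made for this example, not a description of any specific product.

```python
import random


def make_hyperplanes(num_bits, dim, seed=0):
    """Generate num_bits random hyperplanes in dim-dimensional space."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_bits)]


def signature(vector, hyperplanes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(
        1 if sum(v * h for v, h in zip(vector, plane)) >= 0 else 0
        for plane in hyperplanes
    )


def hamming(a, b):
    """Number of bit positions where two signatures differ."""
    return sum(x != y for x, y in zip(a, b))


# Hypothetical feature layout: [hour of day, is_weekend, lights, music, thermostat]
planes = make_hyperplanes(num_bits=16, dim=5)
weekday_early = signature([6.5, 0, 1, 0, 1], planes)
weekday_early_again = signature([6.5, 0, 1, 0, 1], planes)
opposite = signature([-6.5, 0, -1, 0, -1], planes)
```

Identical interactions always land on the same signature, and vectors pointing in similar directions tend to differ in only a few bits, so the device can bucket routines by signature instead of comparing every pair. In practice the features would need scaling so that one dimension, such as hour of day, does not dominate the angle.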

The Role of Edge Computing in Your Home

Edge computing transforms your voice assistant from a simple command executor into a distributed intelligence hub. In this architecture, your voice assistant becomes the primary compute node for your entire smart home, processing data from sensors, cameras, and other devices locally. When a motion sensor detects movement at 2 AM, the assistant can cross-reference this with your typical sleep patterns, recent voice commands, and security system status to determine whether to turn on pathway lighting or trigger an alarm.

This hub-and-spoke model reduces the computational load on individual smart devices while enabling complex multi-device automations that would be impossible if each gadget required cloud connectivity. Your assistant can process data from a dozen sensors simultaneously, running inference across multiple neural networks to make contextual decisions. The edge computing framework also enables device-to-device communication without routing through your router, creating a mesh network that remains functional even if your internet service provider fails.
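The 2 AM motion scenario can be sketched as a small decision function. This version uses hand-written rules purely for illustration, where a real hub would presumably rely on learned patterns; the `HomeState` fields, the ten-minute threshold, and the returned labels are all hypothetical.

```python
from dataclasses import dataclass
from datetime import time


@dataclass
class HomeState:
    alarm_armed: bool
    usual_sleep_start: time
    usual_sleep_end: time
    minutes_since_last_voice_command: int


def in_sleep_window(now, start, end):
    """Handle windows that cross midnight, such as 23:00 to 06:30."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def respond_to_motion(now, state):
    """Combine sleep pattern, recent voice activity, and alarm status."""
    if not in_sleep_window(now, state.usual_sleep_start, state.usual_sleep_end):
        return "no_action"
    # Someone spoke to the assistant recently, so a resident is probably awake.
    if state.minutes_since_last_voice_command <= 10:
        return "pathway_lighting"
    if state.alarm_armed:
        return "trigger_alarm"
    return "pathway_lighting"


asleep_and_armed = HomeState(True, time(23, 0), time(6, 30), 240)
resident_awake = HomeState(True, time(23, 0), time(6, 30), 3)
decision_quiet_house = respond_to_motion(time(2, 0), asleep_and_armed)
decision_recent_voice = respond_to_motion(time(2, 0), resident_awake)
```

All three inputs are available on the hub itself, so the decision involves no cloud round trip.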

Key Benefits of Keeping Your Data Local

The advantages of local AI extend far beyond simple privacy assurances. While keeping your data at home is the headline benefit, the practical improvements touch every aspect of your smart home experience. These systems fundamentally change the relationship between you and your technology, shifting from a service model to an ownership model where you control both the hardware and the intelligence it develops.

Response times improve dramatically when commands don’t need to travel to distant servers. A cloud-based assistant might take 1-3 seconds to process a command during peak usage times; local systems typically respond in 200-500 milliseconds. This near-instantaneous feedback feels more natural, like conversing with a person rather than issuing orders to a distant operator. The difference becomes especially noticeable when executing complex routines involving multiple devices, where local systems can orchestrate actions simultaneously rather than sequentially.

Enhanced Privacy and Security

Enhanced privacy in local AI systems operates on multiple levels simultaneously. First, there’s the obvious benefit: your voice recordings, command history, and learned routines never leave your property. But the security implications run deeper. These devices aren’t just avoiding data transmission; they’re actively protecting against surveillance capitalism’s business model, where your behavior patterns become a commodity to be bought, sold, and analyzed for advertising purposes.

Consider the scenario of a conversation about medical symptoms or financial planning. Any voice assistant, cloud-based or local, must listen continuously to detect its wake word. The difference is what happens next: a cloud-based system streams the audio that follows the wake word, including accidental activations, to remote servers, while a local AI device runs both wake word detection and command processing with tiny, power-efficient models that can run entirely in isolated memory. There’s no audio stream being uploaded or retained for “improvement purposes.” Many devices also implement visual indicators, physical LEDs hardwired to the microphone circuit, so the microphone cannot be powered without the light showing.

Uninterrupted Functionality During Outages

Internet outages reveal the fragility of cloud-dependent smart homes. When your connection drops, traditional assistants become expensive paperweights, unable to control lights, adjust thermostats, or execute any of the routines you’ve come to depend on. Local AI systems treat internet connectivity as an optional enhancement rather than a core requirement.

During an outage, these devices continue learning and adapting. They’ll notice that you manually flipped a light switch because the voice command failed, and they’ll incorporate this into their understanding of your preferences. When connectivity returns, they don’t need to “catch up” or sync missed data because nothing was missed—the intelligence remained active throughout. This resilience extends to scheduled routines; your “wake up” automation will trigger precisely at 6:30 AM whether your broadband is functioning or not, because the timing mechanism and command execution are entirely self-contained.
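A self-contained timing mechanism of the kind described here needs nothing more than the device's own clock. The sketch below shows one way to compute the next firing time for a daily routine; the function names are illustrative, and it assumes naive local datetimes, so it ignores daylight saving transitions.

```python
from datetime import datetime, time, timedelta


def next_trigger(now, at):
    """Return the next datetime at which a daily routine should fire."""
    candidate = datetime.combine(now.date(), at)
    if candidate <= now:
        # Today's slot has already passed, so schedule for tomorrow.
        candidate += timedelta(days=1)
    return candidate


def seconds_until(now, at):
    """How long the device should wait before running the routine."""
    return (next_trigger(now, at) - now).total_seconds()


wake_up = time(6, 30)
evening_before = datetime(2025, 1, 10, 22, 0)
fires_at = next_trigger(evening_before, wake_up)
wait_seconds = seconds_until(datetime(2025, 1, 10, 6, 0), wake_up)
```

Nothing in this calculation touches the network, which is why the 6:30 AM routine fires regardless of broadband status.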

Reduced Latency and Faster Responses

The speed difference between local and cloud processing isn’t just measurable—it’s transformative for user experience. Local AI systems achieve sub-500 millisecond response times by eliminating network travel, server queueing, and round-trip authentication. This speed enables more natural interactions, like adjusting volume mid-sentence or interrupting a routine with a correction.

Consider a complex command: “Dim the living room lights to 30%, set the temperature to 72, and play my evening playlist.” A cloud system processes this sequentially—sending the command, waiting for acknowledgment, executing each action with separate API calls. Local systems parse the entire command holistically, identifying the three distinct actions and executing them simultaneously through direct device communication. The result feels instantaneous, like the home itself is responding to your thoughts rather than your words. This responsiveness also enables more sophisticated voice controls, like whisper detection for nighttime commands or recognition of commands spoken over background noise.
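The difference between sequential and simultaneous execution can be sketched with Python's `asyncio`. The `send_to_device` coroutine below is a hypothetical stand-in for a local device call, with an assumed 50 ms delay simulating device latency; it does not represent any real smart home API.

```python
import asyncio


async def send_to_device(name, command, delay_s=0.05):
    """Stand-in for a local device call; the delay simulates device latency."""
    await asyncio.sleep(delay_s)
    return f"{name}: {command}"


async def run_sequentially(actions):
    """Wait for each action to finish before starting the next."""
    return [await send_to_device(name, command) for name, command in actions]


async def run_concurrently(actions):
    """Start all actions at once and collect results in the original order."""
    return await asyncio.gather(
        *(send_to_device(name, command) for name, command in actions)
    )


actions = [
    ("living_room_lights", "dim to 30%"),
    ("thermostat", "set to 72"),
    ("speaker", "play evening playlist"),
]
results = asyncio.run(run_concurrently(actions))
```

With three simulated 50 ms calls, the sequential version takes roughly the sum of the delays while the concurrent version takes roughly the longest single delay. Both return the same results in the same order.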

Essential Features to Evaluate

When shopping for a local AI voice assistant, the specifications that matter differ significantly from cloud-based systems. You’re not just buying a microphone and speaker; you’re investing in a self-contained computer that will serve as your home’s intelligence center for years. The hardware decisions made by manufacturers directly impact how well the device can learn, adapt, and respond to your unique lifestyle.

Processing power tops the list of considerations. A device with insufficient computational capacity will struggle to run sophisticated learning models, resulting in slower responses and limited ability to handle complex routines. Memory is equally crucial—both RAM for active processing and storage for retaining learned patterns. A device that can’t store at least 6-12 months of interaction data will reset its learning periodically, preventing it from understanding seasonal variations in your behavior.

Processing Power and Neural Engine Specifications

The heart of any local AI assistant is its neural processing unit (NPU) or AI accelerator. Look for devices that specify tera-operations per second (TOPS) ratings—8 TOPS or higher indicates sufficient power for real-time natural language processing and concurrent learning. Some systems combine a general-purpose CPU with a dedicated NPU, allowing the CPU to handle system tasks while the NPU focuses entirely on AI inference.

Clock speed alone doesn’t tell the full story. A 2GHz CPU without AI acceleration might struggle where a 1.5GHz CPU with a dedicated NPU excels. Pay attention to thermal design power (TDP) as well—devices that run hot under load may throttle performance, causing inconsistent response times. The best systems use passive cooling or whisper-quiet fans to maintain peak performance 24/7. Also consider the architecture: ARM-based chips often provide better power efficiency for always-on devices, while x86 architectures might offer more raw processing power at the cost of higher energy consumption.

Local Storage Capacity for Learning Models

Storage capacity determines how much your assistant can learn and remember. Base models typically require 2-4GB of space, but the real storage hog is the personalized learning data. Each voice profile, routine pattern, and device interaction history consumes space. A device with only 8GB of total storage might fill up within a year, forcing it to discard older patterns and lose its understanding of your seasonal routines.

Aim for devices with at least 32GB of fast eMMC or NVMe storage, with 64GB providing comfortable headroom for years of learning. Some premium systems include expandable storage via microSD or USB, allowing you to retain decades of interaction history. This matters because long-term pattern analysis reveals insights that short-term data cannot—like how your routines evolve as children grow or work schedules change. The storage should also be encrypted at the hardware level, ensuring that even physical access doesn’t compromise your privacy.
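A rough storage budget can be worked out with simple arithmetic. The sketch below is only an estimate under stated assumptions: the 10 MB of learning data per day is an illustrative figure, not a measured one, and real devices also reserve space for the operating system.

```python
def years_until_full(total_gb: float, base_model_gb: float,
                     daily_mb: float, reserved_gb: float = 0.0) -> float:
    """Estimate how many years of learning data fit in the remaining storage."""
    free_mb = (total_gb - base_model_gb - reserved_gb) * 1024
    if free_mb <= 0:
        return 0.0
    return free_mb / daily_mb / 365

# Assumed: 4 GB base model, 10 MB of new learning data per day (hypothetical).
for capacity_gb in (8, 32, 64):
    print(capacity_gb, "GB:", round(years_until_full(capacity_gb, 4, 10), 1), "years")
```

Under these assumptions an 8GB device fills in just over a year, while 32GB and 64GB leave several years of headroom. Substitute your own daily figure if the manufacturer publishes one.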

Microphone Array Quality and Noise Cancellation

Microphone technology makes or breaks the voice assistant experience, especially for offline systems that can’t fall back on cloud-based noise filtering. High-quality devices use beamforming arrays of 6-8 microphones that can pinpoint your location in a room and isolate your voice from background noise. This spatial awareness also enables room-specific commands—“turn off the lights in here” works because the assistant knows which room you’re occupying.

Look for specifications like signal-to-noise ratio (SNR) above 65dB and acoustic echo cancellation (AEC) that can handle playback from the device’s own speakers. Far-field recognition should work reliably at 15-20 feet, even with music playing or appliances running. Some advanced systems include ultrasonic presence detection, allowing the assistant to wake before you even speak by detecting your approach. The microphone array should also support multiple simultaneous wake words, letting different family members use personalized activation phrases.

Customization and Training Interfaces

The ability to teach your assistant directly impacts how well it adapts to your life. Effective local AI systems provide transparent training interfaces where you can review what the device has learned, correct misunderstandings, and manually create complex triggers. Look for companion apps that show visual timelines of your routines, allowing you to drag and drop to adjust timing or add conditions.

Voice training should go beyond simple wake word repetition. The best systems let you record example phrases for specific commands, teaching the assistant how you naturally speak. You might say “it’s movie time” instead of “turn off lights and turn on TV,” and the assistant learns to associate your casual phrase with the full routine. Some devices also support gesture training, learning to recognize hand signals or even facial expressions through optional camera modules. The interface should make it easy to export your trained models for backup or migration to newer hardware, ensuring your investment in teaching the assistant isn’t lost when you upgrade.

Technical Infrastructure Requirements

Deploying a local AI voice assistant successfully demands more than just plugging in a device. Your home’s technical infrastructure plays a crucial role in determining how effectively the system can learn and control your environment. A poorly configured network can introduce latency that negates the benefits of local processing, while inadequate power protection can corrupt the learning models you’ve spent months developing.

Start by evaluating your home network’s topology. Local AI assistants perform best when they can communicate directly with smart devices over your local network without routing through your internet gateway. This requires a modern router that supports multicast DNS (mDNS) and has sufficient internal bandwidth to handle dozens of simultaneous device connections. Mesh Wi-Fi systems can help eliminate dead zones where voice commands might fail, but ensure the mesh nodes support wired backhaul to prevent wireless congestion from slowing device-to-device communication.

Home Network Considerations

Your network should prioritize local traffic using quality of service (QoS) settings that give preference to device control packets over general internet traffic. Consider creating a separate VLAN (virtual local area network) for your smart home devices, isolating them from your personal computers and phones for security while allowing the voice assistant to bridge between networks. Because mDNS discovery does not cross VLAN boundaries by default, this usually requires enabling an mDNS reflector or an equivalent setting on the router. This segmentation prevents a compromised IoT device from accessing your sensitive data while maintaining the assistant’s ability to control everything.

Bandwidth requirements are surprisingly modest for offline operation—most devices need less than 1Mbps for routine function since audio processing happens locally. However, firmware updates and model improvements can require temporary bursts of 10-50Mbps. Ensure your network can handle these peaks without interrupting other activities. For maximum reliability, connect your voice assistant hub directly to your router via Ethernet, even if it supports Wi-Fi. This eliminates wireless interference that could cause missed commands or delayed responses during critical moments.

Power Backup and Redundancy

Power stability directly impacts your assistant’s ability to learn consistently. Frequent outages or brownouts can corrupt the learning models stored in flash memory, forcing you to retrain from scratch. Invest in an uninterruptible power supply (UPS) with pure sine wave output and at least 30 minutes of runtime. This isn’t just for the voice assistant—it’s for your router, modem, and critical smart home devices like smart locks and thermostats.

Look for UPS units with USB or network connectivity that can signal the assistant when running on battery power. This allows your assistant to automatically enter a power-conservation mode, disabling non-essential functions while maintaining core voice recognition and security routines. Some advanced setups use dual power supplies for the assistant hub, switching seamlessly between utility power and battery backup. Consider also installing a whole-home surge protector at your electrical panel to guard against voltage spikes that could damage the sensitive electronics in your AI hub and connected devices.

Integration With Your Smart Home Ecosystem

A voice assistant, no matter how intelligent, provides limited value if it can’t communicate with your existing devices. Local AI systems face a unique challenge: they must support the same wide range of protocols as cloud-based assistants but without relying on manufacturer servers as intermediaries. This requires direct implementation of communication standards and often means more complex initial setup in exchange for greater long-term reliability.

The integration landscape centers on several key technologies: Zigbee, Z-Wave, and Thread for low-power device networks; Wi-Fi for high-bandwidth devices; Bluetooth for direct phone pairing; and Matter, an application-layer standard that runs on top of Thread, Wi-Fi, and Ethernet rather than using a radio of its own. A truly capable local AI hub should include radios for at least three of the wireless protocols, preferably with firmware-upgradeable support for emerging standards. Matter is particularly important because it enables local control of compatible devices regardless of manufacturer, creating a unified ecosystem that doesn’t depend on cloud services.

Compatibility Standards Matter

When evaluating compatibility, dig deeper than “works with” badges on packaging. Investigate whether the integration is cloud-dependent or truly local. Some devices claim to support local control but only expose basic functions—on/off commands might work offline while advanced features like color temperature adjustment or sensor calibration still require cloud access. Look for community-verified compatibility lists where users have tested functionality during internet outages.

The best local AI assistants support open APIs and publish their integration protocols, allowing community developers to create support for obscure or older devices. This open approach future-proofs your investment; even if a manufacturer abandons a product, the community can maintain integration. Check whether the assistant supports importing device configurations from open-source platforms like Home Assistant, which provides thousands of local integrations. This compatibility layer can bridge the gap between your AI assistant and devices that don’t natively support local control.

Creating Automation Rings and Routines

Local AI excels at creating sophisticated automation “rings”—interconnected routines that adapt based on multiple triggers and conditions. Unlike cloud-based systems that often limit you to simple “if this, then that” logic, offline assistants can process multiple variables simultaneously. Your “goodnight” routine might check that all doors are locked, windows are closed, the garage is secure, and no motion has been detected in the kids’ rooms for 30 minutes before dimming lights and arming the security system.
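The “goodnight” example can be sketched as a routine that checks every precondition before acting. The HomeState fields and the action names below are hypothetical, chosen only to mirror the conditions described above.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class HomeState:
    doors_locked: bool
    windows_closed: bool
    garage_closed: bool
    minutes_since_kids_motion: float

def goodnight_blockers(state: HomeState, quiet_minutes: float = 30) -> List[str]:
    """Return the reasons the routine should not run yet (empty list means go)."""
    blockers = []
    if not state.doors_locked:
        blockers.append("a door is unlocked")
    if not state.windows_closed:
        blockers.append("a window is open")
    if not state.garage_closed:
        blockers.append("the garage is open")
    if state.minutes_since_kids_motion < quiet_minutes:
        blockers.append("recent motion in the kids' rooms")
    return blockers

def run_goodnight(state: HomeState) -> List[str]:
    blockers = goodnight_blockers(state)
    if blockers:
        # Tell the user what is wrong instead of silently arming the system.
        return ["announce: " + ", ".join(blockers)]
    return ["dim_lights", "arm_security"]
```

Returning the list of blockers, rather than a simple yes or no, lets the assistant explain why the routine did not run.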

The learning aspect becomes powerful here. The assistant might notice you always manually override the thermostat after the goodnight routine runs, learning that you prefer it cooler than your initial setting. It will eventually incorporate this preference automatically. Look for systems that support nested conditions, time-based variables, and device state queries. The interface should allow you to create routines that span days—like a vacation mode that learns to simulate your presence by replaying learned lighting and audio patterns with natural variations.

The Learning Curve: What to Expect

Setting expectations for the learning process prevents frustration and helps you maximize your assistant’s capabilities. Unlike cloud-based systems that come pre-trained on millions of user interactions, local AI assistants start with a general understanding and must learn your specific patterns from scratch. This creates a more personalized result but requires patience during the initial weeks.

The first 72 hours are crucial. During this period, the assistant is building baseline models of your voice, your home’s acoustics, and your most frequent commands. It may seem less capable than your old cloud assistant at first, missing commands or asking for clarification more often. This is normal and necessary—the device is being cautious to avoid learning incorrect patterns. Think of it as training a new employee who asks questions to understand your preferences rather than making assumptions.

Initial Training Periods and Calibration

Most local AI assistants include an explicit training mode where you can run through common commands to accelerate learning. Spend 15-20 minutes during setup speaking various phrases for each intended action. Don’t just say “turn on the lights”—say “lights on,” “illuminate the room,” “it’s too dark,” and other natural variations. This diversity helps the assistant build robust understanding rather than memorizing specific phrases.

Calibration extends beyond voice recognition. The assistant needs to learn ambient noise patterns: the hum of your HVAC, the dishwasher’s cycle, street noise at different times of day. Many devices include a “listening period” where they passively monitor your environment without executing commands, building an acoustic model of your home. This phase typically lasts 3-7 days and significantly improves recognition accuracy. Resist the urge to disable features during this period—the assistant is gathering the contextual data it needs to serve you better.

How Routines Evolve Over Time

The evolution of learned routines follows a fascinating trajectory. Initially, the assistant identifies simple correlations: “User says ‘good morning’ between 6:30-7:00 AM, then requests kitchen lights and weather.” After a few weeks, it recognizes exceptions: “On Tuesdays, ‘good morning’ happens at 5:45 AM, and the request includes traffic information.” Over months, it develops meta-patterns: “User’s morning routine shifts 30 minutes earlier on days following late-night calendar events.”
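One simple way to picture how such correlations and exceptions might be found is to group command times by weekday and compare each day against the overall norm. This is an illustrative sketch, not how any particular product works; the event data and the 20-minute tolerance are assumptions.

```python
from collections import defaultdict
from statistics import median

def typical_minutes_by_weekday(events):
    """events: iterable of (weekday, minutes_after_midnight) for one command."""
    by_day = defaultdict(list)
    for weekday, minutes in events:
        by_day[weekday].append(minutes)
    return {day: median(times) for day, times in by_day.items()}

def unusual_days(typical, tolerance=20):
    """Days whose typical time differs from the overall median by more than tolerance."""
    baseline = median(typical.values())
    return {day: t for day, t in typical.items() if abs(t - baseline) > tolerance}

# Hypothetical "good morning" timestamps, in minutes after midnight.
events = [
    ("Mon", 400), ("Mon", 405), ("Mon", 410),   # around 6:45 AM
    ("Tue", 340), ("Tue", 345), ("Tue", 350),   # around 5:45 AM
    ("Wed", 400), ("Wed", 410),
]
typical = typical_minutes_by_weekday(events)
exceptions = unusual_days(typical)
```

With this data, Tuesday stands out as an exception at 345 minutes (5:45 AM), matching the kind of weekly pattern described above.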

This evolution isn’t linear. You might notice the assistant suggesting routines that seem premature or incorrect. This indicates it’s testing hypotheses—if you reject the suggestion, it learns the boundary of that pattern. If you accept, it reinforces the learning. The most sophisticated systems include confidence scoring, only making automatic suggestions when they’re 90%+ certain of the pattern. You can usually adjust this threshold, making the assistant more proactive or conservative based on your comfort level.
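Confidence scoring with an adjustable threshold could look something like the following. The smoothed ratio used here is one common choice and an assumption on our part; manufacturers do not generally publish their scoring formulas.

```python
def pattern_confidence(hits: int, misses: int) -> float:
    """Smoothed share of observations that matched the pattern.

    Adding 1 to hits and 2 to the total keeps a pattern seen only once or
    twice from being scored as 100% certain.
    """
    return (hits + 1) / (hits + misses + 2)

def should_suggest(hits: int, misses: int, threshold: float = 0.90) -> bool:
    """Suggest a routine only when confidence reaches the user's threshold."""
    return pattern_confidence(hits, misses) >= threshold
```

Lowering the threshold makes the assistant more proactive; raising it makes the assistant wait for more evidence before speaking up.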

Privacy and Data Ownership Considerations

True data ownership means more than keeping information local—it means having complete control over what gets recorded, how long it’s retained, and how it can be used. Local AI systems should provide transparent dashboards showing exactly what data exists on the device, down to individual voice clips and learned patterns. This transparency allows you to audit your assistant’s memory and delete specific interactions without wiping the entire learning history.

Consider the implications of data retention policies. A system that stores everything indefinitely might eventually run out of space or become a liability if the device is stolen. Conversely, aggressive deletion policies might prevent the assistant from recognizing long-term patterns. The ideal approach is tiered retention: detailed interaction logs kept for 30 days, summarized patterns retained for 12 months, and meta-patterns (like seasonal behaviors) stored indefinitely. You should be able to adjust these timelines and export your data in standardized formats.
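The tiered retention scheme described above maps naturally onto a small lookup table. The record layout and the kind names below are hypothetical; only the 30-day, 12-month, and indefinite tiers come from the text.

```python
from datetime import datetime, timedelta

# None means "keep indefinitely".
RETENTION = {
    "interaction_log": timedelta(days=30),
    "summary": timedelta(days=365),
    "meta_pattern": None,
}

def is_expired(kind: str, created: datetime, now: datetime) -> bool:
    limit = RETENTION[kind]
    return limit is not None and now - created > limit

def prune(records: list, now: datetime) -> list:
    """Return only the records that are still within their retention window."""
    return [r for r in records if not is_expired(r["kind"], r["created"], now)]
```

Making the timelines adjustable is then a matter of editing the values in the RETENTION table.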

Understanding Data Retention Policies

Examine how the assistant handles voice recordings. The best systems perform immediate processing, converting speech to text locally and then discarding the audio unless you explicitly opt to save it for training purposes. If audio is stored, it should be encrypted with keys that never leave the device. Some systems use differential privacy techniques, adding statistical noise to stored patterns so that individual commands can’t be identified while preserving overall behavioral trends.
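As a rough illustration of adding statistical noise to stored patterns, the sketch below applies the standard Laplace mechanism to a table of command counts. Which technique a given product actually uses is not something we can confirm; the epsilon value and the assumption that one command changes one count by at most 1 are ours.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two exponential samples with mean `scale`
    # follows a Laplace distribution centered on zero.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def private_counts(counts: dict, epsilon: float, rng: random.Random = None) -> dict:
    """Return noisy copies of the counts; smaller epsilon means more noise."""
    rng = rng or random.Random()
    scale = 1.0 / epsilon  # assumes each command affects one count by at most 1
    return {name: value + laplace_noise(scale, rng) for name, value in counts.items()}
```

The noisy counts still show which commands are common, but a single stored number no longer reveals exactly how many times something was said.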

Pay attention to what happens during device servicing or replacement. Reputable manufacturers provide tools to cryptographically erase all personal data, rendering it unrecoverable even with forensic tools. When migrating to a new device, look for systems that allow encrypted transfer of learned models directly between units over your local network, never exposing raw data to the manufacturer. Avoid devices that require uploading your data to a “migration service”—this defeats the purpose of local processing.

Firmware Updates and Model Improvements

Firmware updates for local AI devices walk a fine line between improving functionality and preserving your learned data. Updates should be cryptographically signed and delivered as differential packages that modify only the base AI model, leaving your personalized learning layer intact. The best systems perform A/B testing of new models, running them in parallel with the old version and only switching over if the new model performs better on your specific usage patterns.
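Running a new model alongside the old one and switching only if it does better can be pictured as a comparison on your own recorded commands. In this sketch a “model” is simply any function that maps an utterance to an intent, and the sample data is hypothetical.

```python
def accuracy(model, samples) -> float:
    """Share of (utterance, expected_intent) pairs the model gets right."""
    if not samples:
        return 0.0
    correct = sum(1 for utterance, intent in samples if model(utterance) == intent)
    return correct / len(samples)

def choose_model(current, candidate, samples, min_gain: float = 0.0):
    """Keep the current model unless the candidate is better by more than min_gain."""
    if accuracy(candidate, samples) > accuracy(current, samples) + min_gain:
        return candidate
    return current
```

Setting min_gain above zero makes the switch more conservative, so a new model has to be clearly better rather than marginally better before it replaces the one you have trained.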

Be wary of updates that require “retraining” or “relearning” your routines—these indicate the manufacturer doesn’t understand how to preserve personalized models. Quality systems publish detailed changelogs explaining what aspects of the AI are being updated and how this might temporarily affect recognition accuracy. Some even allow you to roll back updates if the new version performs worse for your specific voice or routines. Schedule updates during low-usage periods, as the installation process may require rebooting the device and temporarily pausing active learning.

Cost Analysis and Value Proposition

The financial investment in local AI voice assistants typically exceeds cloud-based alternatives, but the calculation changes when considering total cost of ownership over a 5-7 year lifespan. Entry-level local AI hubs start around $200-300, while premium systems with advanced neural processors can reach $500-800. This compares to $50-150 for basic cloud assistants, but the comparison misses crucial value differences.

Factor in the absence of subscription fees. Many cloud assistants require monthly payments for advanced features like routine scheduling, device grouping, or voice recognition for multiple family members. These fees can total $60-120 annually, making a $300 local device cost-neutral within 2-3 years. Additionally, local systems often have longer usable lifespans—cloud assistants become obsolete when manufacturers discontinue server support, while local devices continue functioning indefinitely as long as the hardware remains viable.
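The break-even point is easy to check for your own situation. The sketch below uses the price ranges quoted above; the specific midpoint figures plugged in at the end are assumptions for illustration.

```python
def break_even_years(local_price: float, cloud_price: float,
                     annual_subscription: float) -> float:
    """Years until avoided subscription fees cover the extra upfront cost."""
    if annual_subscription <= 0:
        raise ValueError("annual_subscription must be positive")
    return (local_price - cloud_price) / annual_subscription

# Assumed midpoints: $300 local hub, $100 cloud assistant, $90 per year in fees.
years = break_even_years(300, 100, 90)
```

With these midpoint figures the result is a little over two years. A cheaper cloud device or a lower subscription fee pushes the break-even point later, while higher fees bring it forward.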

Initial Investment vs. Long-Term Value

The long-term value proposition centers on data ownership and ecosystem stability. With local AI, you’re purchasing a physical asset that retains value. A well-maintained local hub can be resold, and the learning models you’ve developed can transfer to new hardware. Cloud-based systems are essentially rentals; you gain no equity despite years of payments and data contribution.

Consider also the hidden costs of cloud dependency. Internet service upgrades to handle dozens of cloud-connected devices, cellular backup services for outage protection, and premium router features for traffic prioritization all add to the true cost of cloud assistants. Local systems thrive on basic internet connections and standard networking equipment. Calculate your break-even point by adding up these ancillary costs—most homeowners find that local AI becomes economically advantageous within 18-24 months, even before considering privacy benefits.

Subscription Models and Hidden Costs

Scrutinize subscription models carefully. Some local AI manufacturers offer optional cloud services for remote access or advanced analytics, but these should truly be optional. Avoid systems where core learning features or device integrations require ongoing payments. The ideal model is a one-time purchase with optional paid upgrades for major new capabilities, not a service fee for basic functionality.

Hidden costs often appear in device compatibility. A local hub might require purchasing new smart home devices that support local control, as your existing cloud-dependent gadgets may not function fully offline. Budget for gradual replacement of incompatible devices, prioritizing those that support Thread and Matter protocols. Some manufacturers offer trade-in programs or discounts when migrating from cloud-dependent ecosystems, softening this transition cost. Factor in the time investment as well—local systems require more upfront configuration but save countless hours of troubleshooting cloud connectivity issues over their lifetime.

Troubleshooting and Maintenance

Even the most sophisticated local AI assistants encounter issues that require intervention. Unlike cloud systems where problems often resolve themselves server-side, local devices need your direct involvement to diagnose and fix issues. This isn’t a drawback—it’s a trade-off that gives you control and transparency. When something goes wrong, you can investigate rather than waiting helplessly for a faceless corporation to address it.

Common issues include microphone degradation over time, storage fragmentation that slows learning model updates, and interference from newly added electronic devices. Establish a monthly maintenance routine: check for firmware updates, review the assistant’s learning dashboard for anomalies, and physically inspect the device for dust accumulation that could affect microphone performance. Many systems include diagnostic modes that test microphone arrays, network connectivity, and processing speed, providing baseline metrics you can compare over time to detect degradation.

Common Offline Learning Challenges

One frequent challenge is the “overfitting” problem, where the assistant becomes too specialized to your patterns and fails to handle legitimate variations. You might normally say “goodnight” at 10 PM, but when you say it at 8 PM during an illness, the assistant might not recognize the command because it falls outside the learned time window. Combat this by occasionally issuing commands in different ways or at unusual times, giving the assistant diverse data points that build flexibility.

Another issue is “catastrophic forgetting,” where learning new patterns causes the assistant to lose previously learned behaviors. In machine learning the term describes a model whose new training overwrites what it learned earlier; on a home hub, limited storage that forces old pattern data to be discarded produces a similar effect. If you notice the assistant forgetting established routines, check storage capacity and consider enabling “pattern protection” features that lock in well-established behaviors. Some systems allow you to manually weight certain routines as high-priority, preventing them from being displaced by newer, less frequent commands.
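A “pattern protection” feature could work along these lines: when space runs short, protected routines are kept first and the remaining slots go to the most-used patterns. The Pattern class and the capacity limit are hypothetical, and actual products may use a different eviction policy.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Pattern:
    name: str
    uses: int
    protected: bool = False

def evict_to_capacity(patterns: List[Pattern], capacity: int) -> List[Pattern]:
    """Keep every protected pattern, then fill remaining slots by usage count."""
    protected = [p for p in patterns if p.protected]
    others = sorted((p for p in patterns if not p.protected),
                    key=lambda p: p.uses, reverse=True)
    room = max(capacity - len(protected), 0)
    return protected + others[:room]
```

In this sketch a rarely used but protected routine survives even when more frequently used patterns are competing for the same space.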

When to Reset and Retrain Your Assistant

Knowing when to reset is as important as knowing how to train. A full reset should be rare—perhaps when changing households or after a major lifestyle shift like retirement that fundamentally alters your daily patterns. Partial resets are more common: clearing specific routines that no longer apply, removing voice profiles of former household members, or pruning old device integrations.

Before resetting, always export your learned models and configuration. Even if you’re starting fresh, having the old data as reference helps you rebuild routines more efficiently. The best time for a reset is during a period of predictable routine, like a regular work week, when you can spend 30 minutes each day for a week retraining core functionalities. Avoid resetting during holidays or vacations when your behavior is atypical. After resetting, resist the urge to immediately restore old configurations—take the opportunity to rebuild intentionally, incorporating only the routines you actually used rather than those you thought you wanted.

Frequently Asked Questions

How much internet bandwidth do local AI voice assistants actually need?

Local AI assistants require minimal internet connectivity—typically less than 1Mbps for basic operation since all voice processing happens on-device. You’ll only need bandwidth for occasional firmware updates and time synchronization. During internet outages, the assistant continues functioning fully, controlling devices over your local network. Some advanced features like remote access or weather updates require internet, but core voice recognition and routine execution remain completely offline.

Can offline voice assistants distinguish between different family members’ voices?

Yes, modern local AI systems excel at speaker identification without cloud support. During setup, each family member records a short voice sample that creates a unique vocal fingerprint stored locally. The assistant uses these models to personalize responses, access individual calendars, and enforce parental controls. Some systems can identify 6-8 distinct voices with 95%+ accuracy, even with colds or voice strain, by focusing on vocal tract characteristics that remain consistent.

What happens to my learned routines if the device breaks or I upgrade?

Reputable local AI systems include encrypted backup and migration tools. You can export your learned models to a USB drive or network storage, creating a snapshot of your assistant’s understanding. When replacing hardware, import this data directly to the new device over your local network. The best systems maintain backward compatibility for at least three generations, ensuring your training investment isn’t lost. Always verify migration capabilities before purchasing, as some devices lock learned data to specific hardware.

How long does it take for a local AI assistant to learn my routines accurately?

Most users see noticeable improvements within 7-10 days as the assistant learns basic patterns. Deep understanding of nuanced behaviors typically emerges after 4-6 weeks of consistent interaction. Seasonal patterns may take 3-6 months to fully integrate. The learning never truly stops—the assistant continuously refines its models—but the steepest improvements happen in the first month. Accelerate the process by using the training mode and maintaining consistent routines during the initial period.

Are offline voice assistants as capable as cloud-based ones for complex commands?

For device control and routine execution, local AI often exceeds cloud assistants in speed and reliability. However, they may lag in general knowledge questions since they can’t access real-time internet databases. The trade-off is specialization: local assistants become experts at your home and habits while cloud assistants remain generalists. For smart home control, local AI is superior. For asking about celebrity birthdays or sports scores, you’ll need internet access or a hybrid approach.

Can I access and review the data my assistant has learned about me?

Quality local AI systems provide transparent data dashboards showing learned patterns, voice samples, and routine histories. You can typically view this through a secure local web interface or companion app that connects directly to the device. The data should be presented in human-readable formats, showing patterns like “Most frequent command: ‘turn off bedroom lights’ (47 times, 10:00-10:30 PM).” You can delete specific entries or entire categories of learning without affecting unrelated patterns.

Will my local AI assistant work with all my existing smart home devices?

Compatibility depends on the protocols your devices support. Devices using Zigbee, Z-Wave, Thread, or Matter typically work well locally. Many Wi-Fi devices designed for cloud control may have limited offline functionality. Check community-maintained compatibility databases before purchasing. Some local hubs can bridge cloud-only devices, but this introduces latency and potential failure points. Plan to gradually replace cloud-dependent devices with locally controllable alternatives for maximum reliability.

What’s the power consumption difference between local and cloud-based assistants?

Local AI assistants typically consume 5-15 watts continuously, slightly higher than basic cloud assistants (3-8 watts) due to on-device processing. Cloud assistants do spend part of their power budget on constant connections and frequent keep-alive signals, which narrows the gap a little. Over a year, expect anywhere from a few dollars to roughly $20 in additional electricity costs for a local system, depending on the actual wattage gap and your local rate. The premium supports continuous learning and instant response capability. Some devices include eco-modes that reduce power consumption during predictable sleep hours when voice interaction is unlikely.
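The yearly cost depends heavily on the wattage gap and on what you pay for electricity, so it is worth running the arithmetic with your own numbers. The $0.15 per kWh rate below is an assumed example, not a quoted average.

```python
HOURS_PER_YEAR = 24 * 365

def annual_cost(watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost of a device that draws `watts` continuously."""
    return watts * HOURS_PER_YEAR / 1000 * price_per_kwh

def extra_annual_cost(local_watts: float, cloud_watts: float,
                      price_per_kwh: float) -> float:
    """How much more the local device costs to run per year."""
    return annual_cost(local_watts - cloud_watts, price_per_kwh)

# Assumed: 10 W local hub versus 5.5 W cloud assistant at $0.15 per kWh.
typical_gap = extra_annual_cost(10, 5.5, 0.15)
```

At these figures the gap works out to about $6 a year. A 15-watt hub compared with a 3-watt cloud device at the same rate comes to just under $16.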

Can I take my local AI assistant with me when I move to a new house?

Yes, and your learned routines transfer with you. The assistant will need to relearn room layouts and device locations, but its understanding of your timing preferences, voice patterns, and general routines remains intact. Some systems include “moving mode” that preserves behavioral patterns while resetting location-specific data. The acoustic model of your voice and core routine structures (like morning sequences) transfer seamlessly, making the retraining process in your new home much faster than initial setup.

How often do I need to update the AI models and firmware?

Most manufacturers release firmware updates quarterly, with critical security patches delivered as needed. AI model updates are less frequent—typically every 6-12 months—and should be optional since your assistant continues learning independently. Enable automatic updates for security patches but review AI model updates before installing, as they can temporarily affect recognition accuracy. The best systems perform updates during low-usage periods and create restore points, allowing rollback if issues arise. Plan to spend 30 minutes every 3-4 months reviewing and applying updates.